Highlights

- Samsung may rename its foldable lineup with the flagship launching as Galaxy Z Fold 8 Ultra, while the wide-folding model could be called Galaxy Z Fold 8.

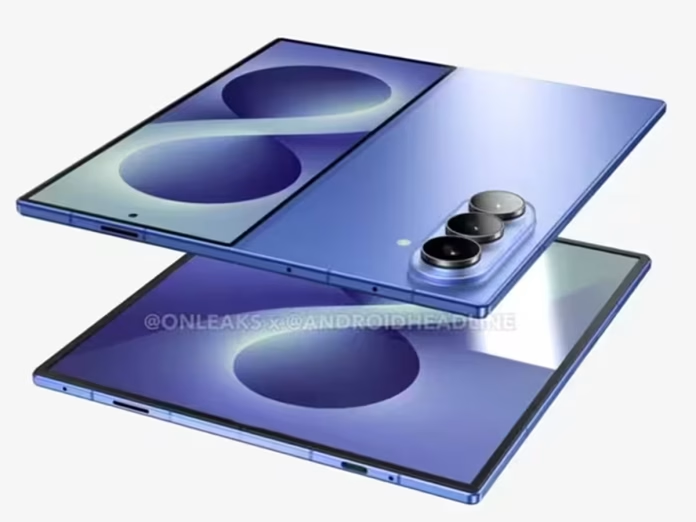

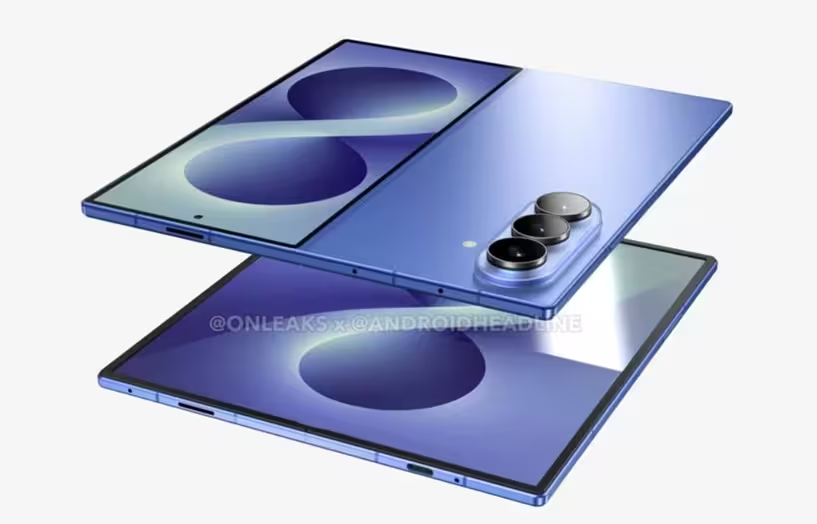

- Leaked screen protector images of the Galaxy Z Fold 8 Wide show squared corners, slim bezels and a centred hole-punch selfie camera.

- The Galaxy Z Fold 8 Wide may feature a 5.4-inch cover display, 7.6-inch 4:3 inner display, Snapdragon 8 Elite Gen 5 chip, 4,800mAh battery and dual 50MP cameras.

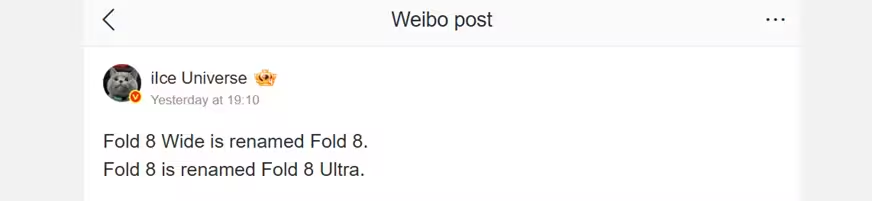

Samsung is expected to unveil its next-generation foldable smartphones, including the Galaxy Z Fold 8 and Galaxy Z Flip 8 lineups, in July. Alongside these devices, the company is also rumoured to launch its first wide-folding smartphone. While Samsung has not officially confirmed the upcoming foldables, multiple leaks and reports have already surfaced online. A fresh leak now suggests that the South Korean brand could adopt a new naming strategy for its book-style foldable series.

Samsung Galaxy Z Fold 8 Series Monikers Tipped

According to tipster Ice Universe on Weibo, Samsung may rename its upcoming foldable lineup. The tipster claims that the company’s flagship foldable phone will launch as the Samsung Galaxy Z Fold 8 Ultra. Meanwhile, the previously rumoured wide-folding model is now expected to debut simply as the Samsung Galaxy Z Fold 8.

Earlier rumours had suggested that the two devices would be called the Samsung Galaxy Z Fold 8 and Samsung Galaxy Z Fold 8 Wide.

However, Samsung now appears to be repositioning the lineup by introducing the Ultra branding to its premium foldable smartphone.

If true, this would mark the first time Samsung uses the Ultra branding for its Galaxy Z Fold series. The company first introduced the Ultra branding in its flagship Galaxy S lineup with the Samsung Galaxy S20 Ultra back in 2020.

Samsung Galaxy Z Fold 8 Wide Screen Protector Image (Leaked)

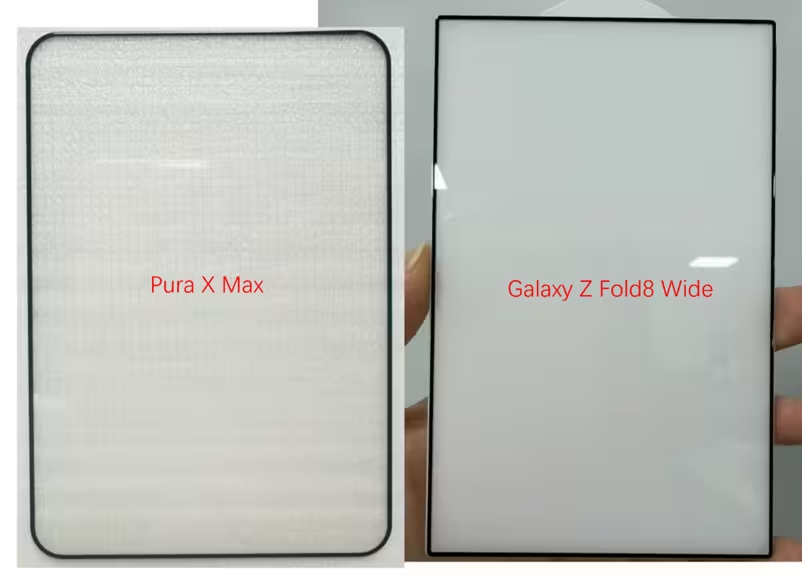

In a separate leak on X, the same tipster (Ice Universe) shared an image of the alleged screen protector for the Samsung Galaxy Z Fold 8 Wide. The leaked image offers a glimpse of the cover display design.

Galaxy Z Fold8 Wide! pic.twitter.com/p8uXI8ogLo

— Ice Universe (@UniverseIce) May 23, 2026

The leaked image suggests that the phone could feature squared-off display corners along with relatively slim bezels.

The tipster also overlaid the screen protector image on another device for comparison.

If we add a screen to this tempered glass protector for the Samsung Galaxy Z Fold8 Wide, its cover screen would basically look like the image on the right.

Of course, the real device will not have bezels this narrow, and it will also have a punch hole. pic.twitter.com/hkspJKo9vR

— Ice Universe (@UniverseIce) May 23, 2026

The cover display is also shown with a centred hole-punch cutout, which is expected to house the selfie camera.

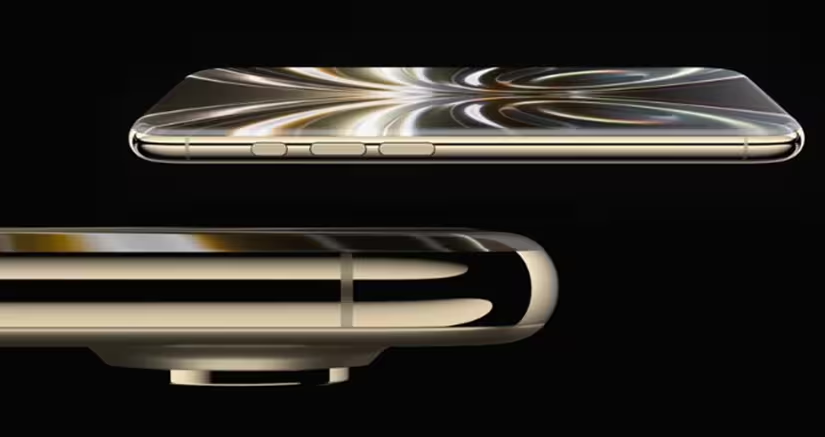

The latest leak further strengthens previous reports suggesting that Samsung’s wide-folding phone will offer a more traditional smartphone-like experience when folded, while opening into a nearly square-shaped 4:3 aspect ratio inner display for improved productivity.

Based on the tipster's comparison with the Huawei Pura X Max, Samsung’s upcoming foldable appears to be narrower when folded shut and wider when unfolded.

HUAWEI Pura X Max and Galaxy Z Fold8 Wide. pic.twitter.com/7CSBIOpsYq

— Ice Universe (@UniverseIce) May 23, 2026

Samsung Galaxy Z Fold 8 Wide – Specifications (Expected)

According to leaks and rumours, the Samsung Galaxy Z Fold 8 Wide may feature a 5.4-inch cover display and a 7.6-inch inner display with a 4:3 aspect ratio.

The foldable smartphone is expected to be powered by the Qualcomm Snapdragon 8 Elite Gen 5 processor. It could pack a 4,800mAh battery with support for 45W fast charging.

In terms of cameras, Samsung may opt for a dual rear camera setup consisting of a 50-megapixel primary sensor and a 50-megapixel ultra-wide camera. Reports claim that the company could skip the telephoto camera in order to maintain a slimmer design profile.

The smartphone is also rumoured to weigh around 200 grams.

Galaxy Unpacked Event Expected on July 22

As for the launch timeline, a recent report claimed that Samsung may host its next Galaxy Unpacked launch event on July 22 in London. During the event, the company is expected to introduce the Samsung Galaxy Z Fold 8 Ultra, the wide-folding Samsung Galaxy Z Fold 8, and the Samsung Galaxy Z Flip 8.

However, Samsung has not officially confirmed the launch event or the upcoming foldable lineup yet.

FAQs

Q1. What new naming strategy is Samsung expected to use for its foldables?

Answer. Leaks suggest the flagship will be called Galaxy Z Fold 8 Ultra, while the wide-folding model may debut as the Galaxy Z Fold 8.

Q2. What design details were revealed for the Galaxy Z Fold 8 Wide?

Answer. Leaked screen protector images show squared corners, slim bezels, and a centred hole-punch selfie camera, hinting at a more traditional folded design.

Q3. When is Samsung expected to launch the Galaxy Z Fold 8 series?

Answer. Reports claim Samsung may host its Galaxy Unpacked event on July 22 in London, unveiling the Galaxy Z Fold 8 Ultra, the wide-folding Galaxy Z Fold 8 (previously rumoured as the Fold 8 Wide), and the Galaxy Z Flip 8.